Key Summary

The client is a Myanmar (Burmese) mobile financial platform primarily serving local users. The client needed to assess the UX of their mobile financial application to find out whether the current design was usable.

While the product was functional, there was no structured review identifying where the experience fell short of established usability standards.

Without a systematic UX evaluation, it was difficult for the team to prioritize improvements and strengthen the overall user experience.

Strategic Approach

Initially, the team considered conducting usability testing to uncover usability issues directly from real users. However, due to time constraints, language barriers, and recruitment challenges, especially since the majority of users were local residents who spoke languages other than English and Thai, we needed a more practical approach.

Given these limitations, we decided to conduct a heuristic evaluation as a time-efficient and cost-effective method to systematically identify usability issues.

My team and I reviewed key user flows, documented issues, categorized them by severity, and translated the findings into structured recommendations for improvement.

The goal was not only to point out issues, but to provide clarity on what should be improved first and why.

Business Impact

The evaluation provided the team with a clear, prioritized list of usability issues, helping them move from assumptions to structured improvements. This helped align stakeholders on design priorities and enabled the team to focus on high-impact, quick-win improvements.

Key Takeaway

This heuristic evaluation helped the team quickly identify where the current design fell short of usability standards, providing a clear starting point for improvement. It helped the client to address critical issues in a structured and efficient way.

While heuristic evaluation cannot replace usability testing with real users, it proved to be a cost-effective and time-efficient method for diagnosing usability risks. When complemented by usability testing, it becomes part of a stronger, more comprehensive design evaluation strategy.

Full Case Study

Still curious about this project? Use the section below to see my complete end-to-end thinking process.

Project Background

Our client, one of the mobile financial service providers in Southeast Asia, planned to launch a new version of their application soon. Initially, the client approached us for a development service. However, when our team reviewed the application, we found the app's interface to be poorly designed, difficult to use, and inconsistent across multiple design areas.

Our team proposed to help identify areas for improvement in the interface design, to ensure the design was usable and followed standard practices before launch.

Note: At first, our team considered usability testing the most appropriate way to discover usability problems from real users. However, due to time constraints, language barriers, and recruitment challenges (the majority of the product's users are local residents who speak languages other than English and Thai), a heuristic evaluation was the more practical solution for this situation.

Research Process Overview

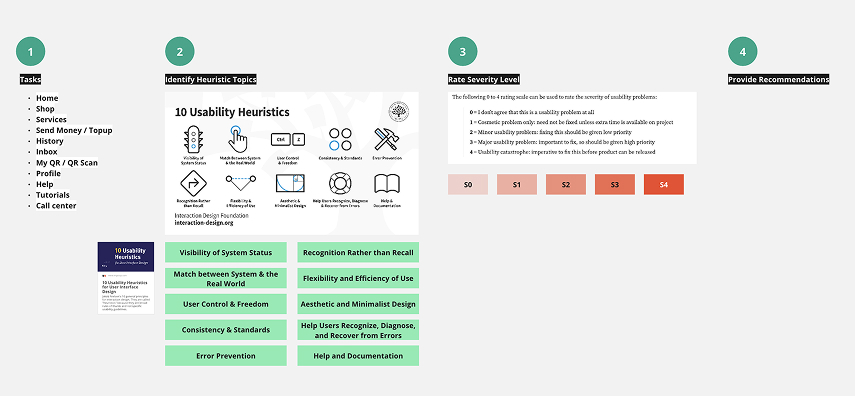

Plan

Define features to evaluate and metrics, select evaluators, and brief tasks

Gather & Analyze

Gather issues, review them with the evaluators, and summarize data

Apply

Develop a summary report with recommendations

Plan

Plan the Evaluation

My role in this project was to manage the heuristic evaluation process, from conducting the evaluation to gathering issues and summarizing them into a report.

Typically, a heuristic evaluation is similar to usability testing in terms of the number of people involved. It's recommended to have 3 or more experts conduct the evaluation independently, because different evaluators tend to notice different problems and together they uncover a more comprehensive set of issues.

In this project, I assigned a total of 3 people (2 UX researchers, including myself, and 1 UX designer) to go through the application individually. Each person listed the issues they found on a Miro board, using Nielsen's 10 Heuristics and Severity Levels as a guideline.

For each issue, the evaluators had to record:

- Issues

- Heuristic Tool

- Severity Level

- The flow where the issue was found (e.g., onboarding, transferring money)

- Recommendations
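The fields listed above can be captured as a simple record. The following is only a minimal sketch: the `Issue` and `Severity` names are illustrative, the 0-4 scale assumes Nielsen's standard severity ratings (the project only refers to "Severity Levels"), and the example issue is hypothetical rather than an actual finding from this evaluation.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Nielsen's 0-4 severity ratings (assumed scale, see lead-in)."""
    NOT_A_PROBLEM = 0
    COSMETIC = 1
    MINOR = 2
    MAJOR = 3
    CATASTROPHE = 4


# Nielsen's 10 usability heuristics, keyed by number.
HEURISTICS = {
    1: "Visibility of system status",
    2: "Match between system and the real world",
    3: "User control and freedom",
    4: "Consistency and standards",
    5: "Error prevention",
    6: "Recognition rather than recall",
    7: "Flexibility and efficiency of use",
    8: "Aesthetic and minimalist design",
    9: "Help users recognize, diagnose, and recover from errors",
    10: "Help and documentation",
}


@dataclass(frozen=True)
class Issue:
    description: str      # what the evaluator observed
    heuristic: int        # number of the violated heuristic (1-10)
    severity: Severity    # how serious the issue is
    flow: str             # e.g. "onboarding", "transferring money"
    recommendation: str   # suggested fix

    def __post_init__(self):
        # Reject heuristic numbers outside Nielsen's list.
        if self.heuristic not in HEURISTICS:
            raise ValueError(f"unknown heuristic number: {self.heuristic}")


# Hypothetical example entry, for illustration only.
example = Issue(
    description="The same action uses different button labels on different screens",
    heuristic=4,
    severity=Severity.MINOR,
    flow="transferring money",
    recommendation="Use one consistent label for the action across the flow",
)
print(HEURISTICS[example.heuristic], "/", example.severity.name)
```

Keeping every finding in the same shape makes it easier to compare what different evaluators logged for the same flow.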

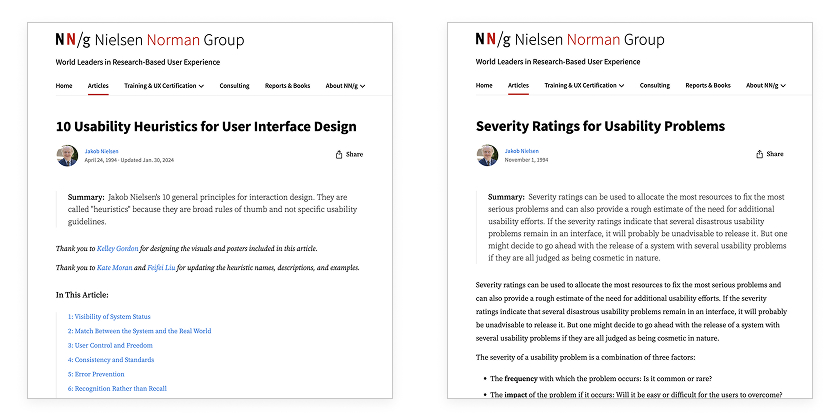

A Miro board was used to brief the evaluators on their tasks

Nielsen's 10 Heuristics and Severity Levels were used as a guideline for evaluating the app

Gather & Analyze

Conducting Heuristic Evaluation

- We gathered issues that we found on a Miro board, organized by flows. This allowed everyone involved to review the data to see where our findings aligned.

- After each evaluator had worked through the evaluation individually, I scheduled a session for the evaluators to discuss the issues found, align on severity levels, and agree on recommendations.

Identified issues were placed on the Miro board

Apply

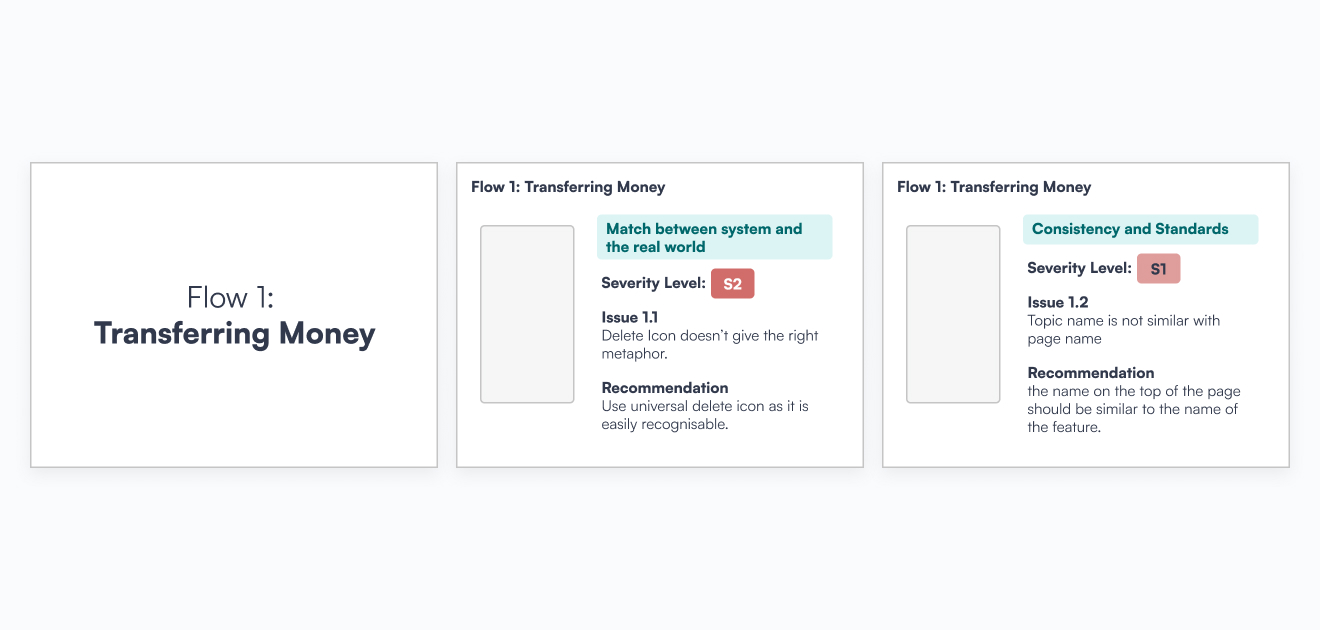

After conducting the heuristic evaluation, we documented all the findings in a single deck. Since our report audience included both the working team (designers) and C-level executives, we divided the report into 3 sections to ensure ease of use.

The 3 sections are:

- Executive Summary: Highlighting the most severe issues that require immediate changes, and an overview of the findings.

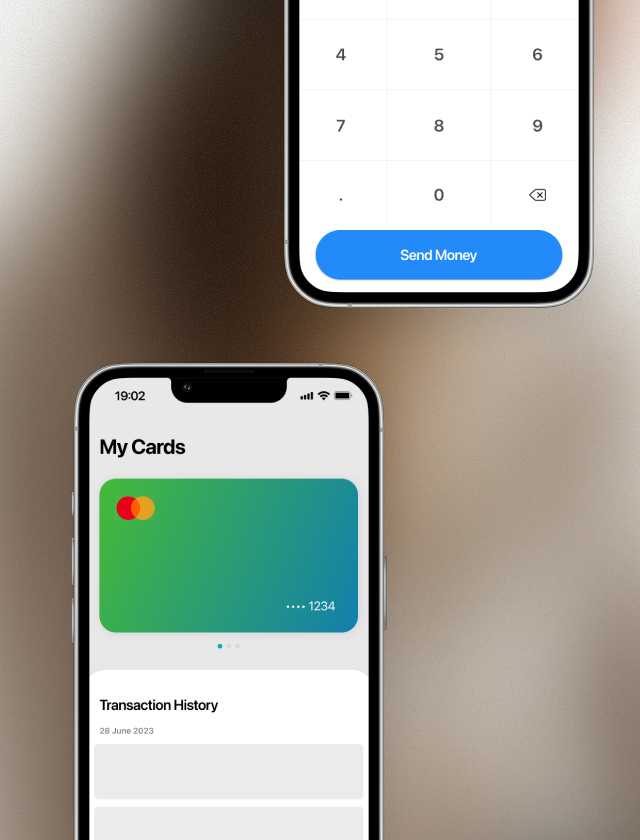

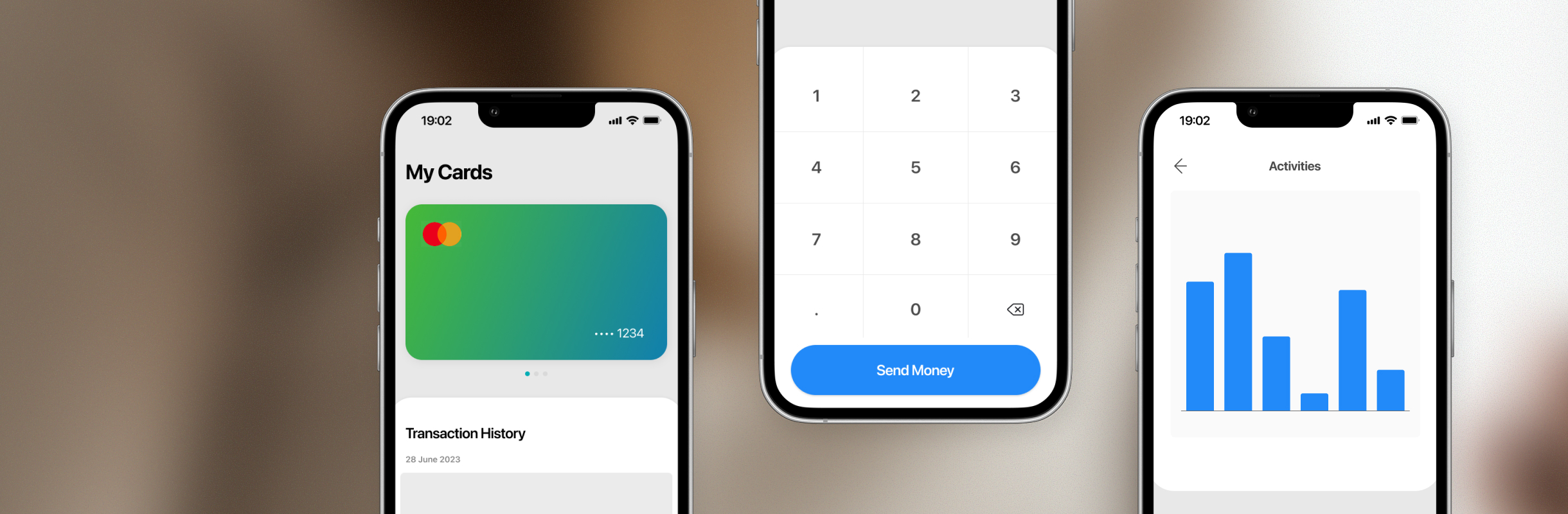

- Heuristic Issues by Flow: Presenting the identified issues organized by the app's flows, allowing readers to follow along with the user flow. For each issue, we included:

- The identified issue with screenshots

- What heuristic(s) it violated

- Severity rating

- Recommendation

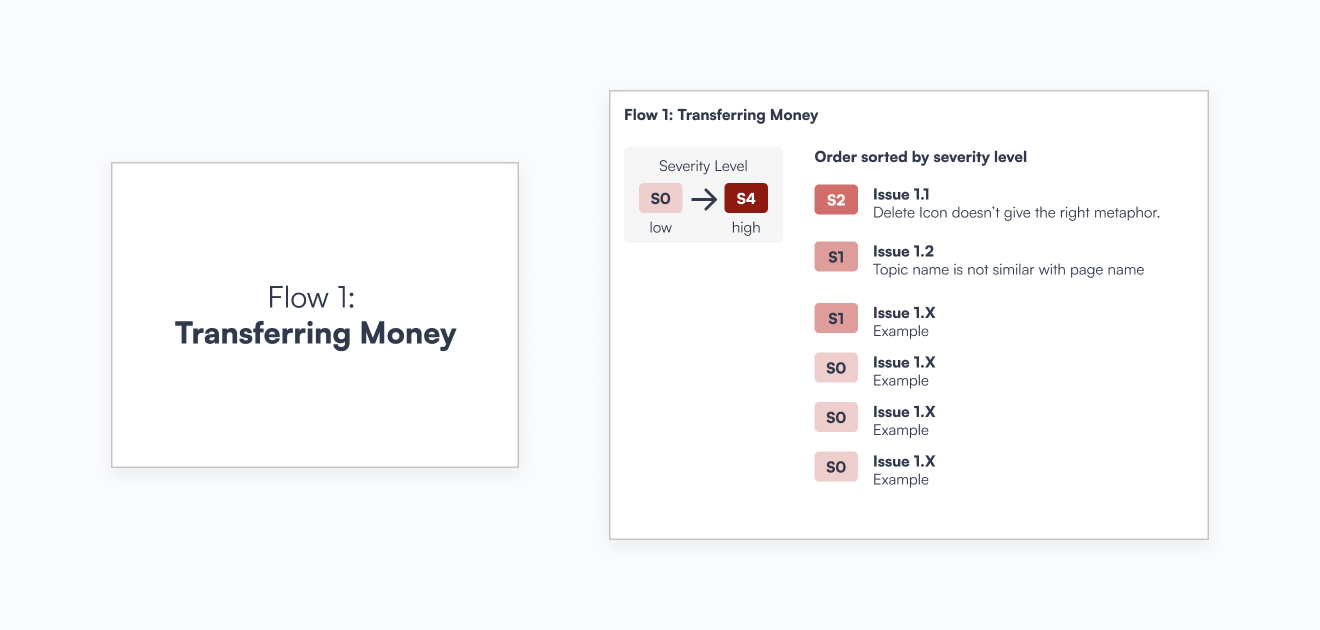

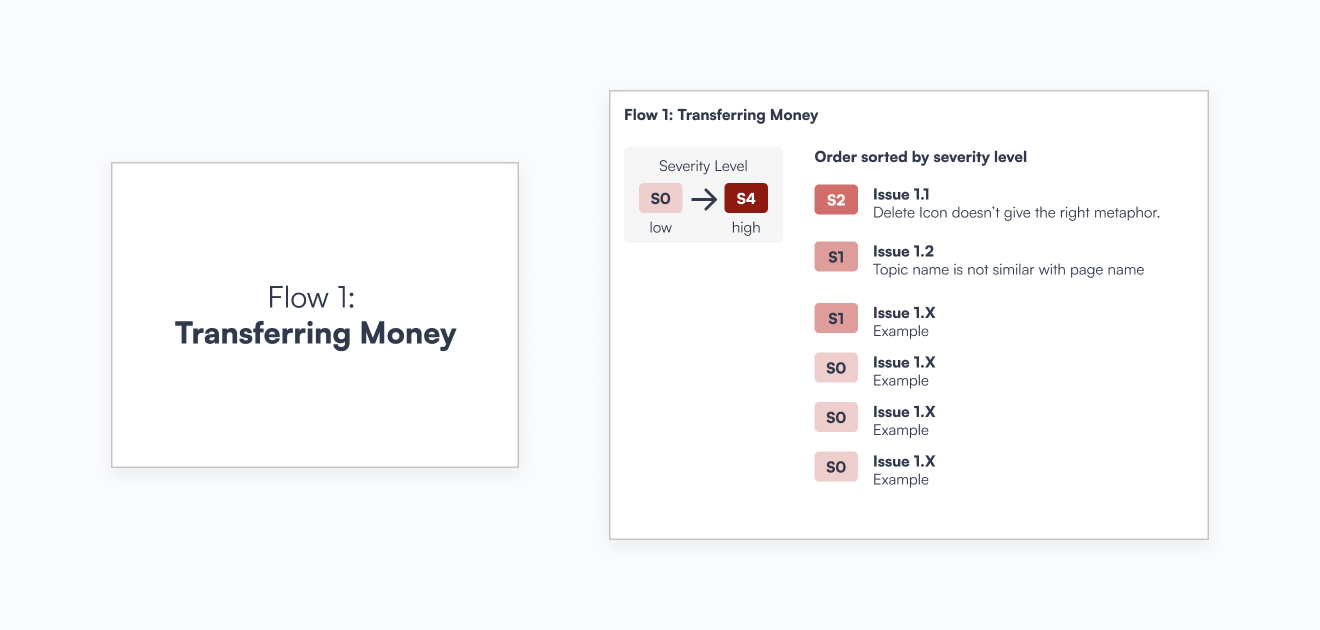

- Issues Sorted by Severity Level: Categorizing issues based on their severity levels, enabling readers to quickly identify high-priority issues that should be addressed first within each flow.
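The "sorted by severity within each flow" view can be sketched in a few lines. This is an illustrative sketch, not the tooling used in the project (the findings were organized on a Miro board and in a deck); the function name, the 0-4 severity numbers, and the sample issues are all assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical records: (flow, severity on an assumed 0-4 scale, description).
issues = [
    ("onboarding", 2, "Inconsistent button labels"),
    ("transferring money", 4, "No confirmation step before sending money"),
    ("onboarding", 3, "No progress indicator during sign-up"),
    ("transferring money", 1, "Misaligned icons"),
]


def by_flow_then_severity(records):
    """Group issues by flow, then list each flow's most severe issues first."""
    grouped = defaultdict(list)
    for flow, severity, description in records:
        grouped[flow].append((severity, description))
    # sorted() is stable, so issues with equal severity keep their logged order.
    return {
        flow: sorted(items, key=lambda item: item[0], reverse=True)
        for flow, items in grouped.items()
    }


for flow, items in by_flow_then_severity(issues).items():
    print(flow)
    for severity, description in items:
        print(f"  [{severity}] {description}")
```

Listing the highest-severity items first in each flow is what lets a reader see at a glance which issues to address first.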

Examples (2) Heuristic Issues by Flow